Running OpenClaw AI

Three tradeoffs I did not expect

I’ve been living with an OpenClaw AI agent for a few weeks now, and I’ve had some mixed emotions. It’s been useful and sometimes surprising. It’s also been frustrating and occasionally expensive. I’ll describe three areas where I don’t have clean answers but rather just different flavors of compromise.

Security vs. Resilience: The Disk Encryption Problem

We recently experienced a somewhat unplanned power outage. My condo building is replacing the HVAC system. The contractors gave us a rough schedule of the work but not the specifics of when they were going to shut off our power to do the necessary rewiring of our new in-condo HVAC unit back to our electrical panel.

One afternoon I was at my office and tried to message OpenClaw. Nothing. The power had come back on after the crews left, but my Mac Mini that I use for OpenClaw was sitting at the login screen waiting for me to enter my FileVault password. Like any good security-minded person, I had full disk encryption enabled. The machine was secure. It was also useless until I got home, plugged in a monitor and keyboard, and typed in my credentials.

The tradeoff was obvious in retrospect. I had meant to optimize for the edge case of someone breaking into my condo and stealing the Mac. What I had actually optimized for was unavailability during the far more common scenario of a power interruption. I could have set up remote SSH access from a secure jump host to enter the decryption password, but that would have introduced monitoring infrastructure and network security complexity I didn’t want to maintain. I could have disabled FileVault entirely. Both options felt like exchanging one risk for another, just in different proportions.

I ended up removing the password requirement and accepting the physical security risk. The Mac now auto-restarts when power returns. My digital assistant is available when I need it, and needing it turns out to be a far more common case than theft. But I think about this decision more than I expected. It is a small example of how we constantly trade security for convenience, often without noticing until the system fails us at the wrong moment.

Structure vs. Flexibility: The Scheduling Problem

I tried something that seemed clever. I added OpenClaw to my group iMessage and WhatsApp threads to help coordinate Happy Hour times. The theory was that an AI assistant could negotiate availability conversationally, handle the back-and-forth, and spare me the administrative overhead.

The reality was messier. The moment people realized there was a bot in the chat, they wanted to play with it. Some were testing its boundaries. Others were genuinely curious. A few were just burning through my AI credits with idle chatter. I found myself paying for other people’s entertainment.

The deeper issue was that unstructured conversation created ambiguity where I wanted clarity. With a Doodle poll or Calendly link, everyone sees the same constraints. The options are explicit. There is no drift, no negotiation, no performative engagement with novel technology. People understand the workflow because they have done it before. The structure is the feature.

I have since locked down the group chats. Now only I can command OpenClaw, and its responses are read-only for everyone else. This experience clarified for me that there are certain tasks where structure serves us better than flexibility. Workflows with defined steps benefit from the rigidity and familiarity of SaaS tools like Calendly or Doodle. Unstructured data analysis, like extracting key points from a YouTube video or answering open-ended questions about content, is where AI shines. The mistake is assuming that the more sophisticated solution is better in every context. Sometimes a form field is exactly what is needed.
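A minimal sketch of the "only I can command it" rule, assuming the agent sees each incoming message with a sender handle. This is not OpenClaw's actual configuration; `Message`, `OWNER_IDS`, and `should_respond` are hypothetical names used only for illustration.

```python
from dataclasses import dataclass

# Hypothetical owner handle; a real setup would use the actual
# iMessage or WhatsApp identifier.
OWNER_IDS = frozenset({"owner@example.com"})


@dataclass(frozen=True)
class Message:
    sender: str
    text: str


def should_respond(message: Message, allowed_senders=OWNER_IDS) -> bool:
    """Return True only when the sender is on the allowlist.

    Everyone else can still read the agent's replies in the thread,
    but their messages never trigger a (billable) model call.
    """
    return message.sender.strip().lower() in allowed_senders
```

The check runs before any model call, so idle chatter from other participants costs nothing.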

Cloud vs. Edge: The Cost Problem

What surprised me most about OpenClaw was how quickly it became cost-conscious. I did not program this explicitly. I mentioned a few times that I was worried about AI credit consumption, particularly after a bug in some error-handling code created a false-positive loop that chewed through my budget diagnosing and fixing itself. After that, the system started recommending local alternatives whenever possible.

Now when I ask it to process a PDF, it uses a version of OCRmyPDF installed locally rather than letting cloud AI do the work. When I want to analyze a YouTube video, it downloads the file with yt-dlp and processes it on the Mac rather than streaming analysis through a cloud API. Speech-to-text happens locally. These are small decisions in isolation, but they add up.
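The local-first pattern can be sketched roughly as follows. This is an illustration under assumptions, not what OpenClaw actually runs: the helper names are hypothetical, and the functions only build command lines for the `ocrmypdf` and `yt-dlp` CLIs rather than executing them.

```python
import shutil
from pathlib import Path


def pick_backend(tool: str, which=shutil.which) -> str:
    """Prefer the local tool when it is on PATH, else fall back to cloud."""
    return "local" if which(tool) else "cloud"


def ocr_command(pdf: Path, out: Path) -> list[str]:
    """Command line for OCRmyPDF: input file, then output file."""
    return ["ocrmypdf", str(pdf), str(out)]


def download_command(url: str, out_dir: Path) -> list[str]:
    """Command line for yt-dlp, saving as <video id>.<extension> in out_dir."""
    template = str(out_dir / "%(id)s.%(ext)s")
    return ["yt-dlp", "-o", template, url]
```

A caller would pass the resulting list to `subprocess.run(..., check=True)` only when `pick_backend` returns `"local"`, and route the task to a cloud model otherwise.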

I would prefer to run more of this locally. There are OpenClaw issues preventing smooth integration with local models, so I am currently using Kimi 2.5 from Moonshot AI. I would accept slower responses to keep my queries from training someone else’s model. The cost matters, but so does the privacy and the autonomy. This is not about speed for me. Most of what I do with OpenClaw does not require real-time response. I am happy to schedule tasks for the future as long as the system does not create conflicting cron jobs that retry and fail and retry again, which happened once and taught me to be careful about overlapping automations.
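One common guard against overlapping scheduled runs is a non-blocking file lock: a job that finds the lock held simply skips its turn instead of retrying. The sketch below assumes a Unix-like system (it uses `fcntl`, so it works on macOS and Linux but not Windows). `single_instance` and the lock path are hypothetical names, not part of OpenClaw.

```python
import fcntl
import os
from contextlib import contextmanager


@contextmanager
def single_instance(lock_path: str):
    """Yield True if this run holds the lock, False if another run does."""
    fd = os.open(lock_path, os.O_CREAT | os.O_RDWR, 0o600)
    try:
        try:
            # LOCK_NB makes the call fail immediately instead of waiting.
            fcntl.flock(fd, fcntl.LOCK_EX | fcntl.LOCK_NB)
        except BlockingIOError:
            yield False  # another run is active; caller should skip
            return
        try:
            yield True
        finally:
            fcntl.flock(fd, fcntl.LOCK_UN)
    finally:
        os.close(fd)
```

A scheduled task would wrap its work in `with single_instance("/tmp/my-task.lock") as acquired:` and return early when `acquired` is false, so a slow run and its retry never execute at the same time.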

The question here is about where intelligence should live. Cloud AI is powerful but dependent, both on connectivity and on the business models of providers who may change terms or pricing without warning. Edge AI is constrained by local hardware and more limited models, but it is autonomous in ways that matter for long-term sustainability. I am finding that my preference leans toward the edge, even when it means accepting slower or less capable responses. The tradeoff feels worth it, though I am aware this preference is shaped by my own risk tolerance and technical setup.

What I am learning

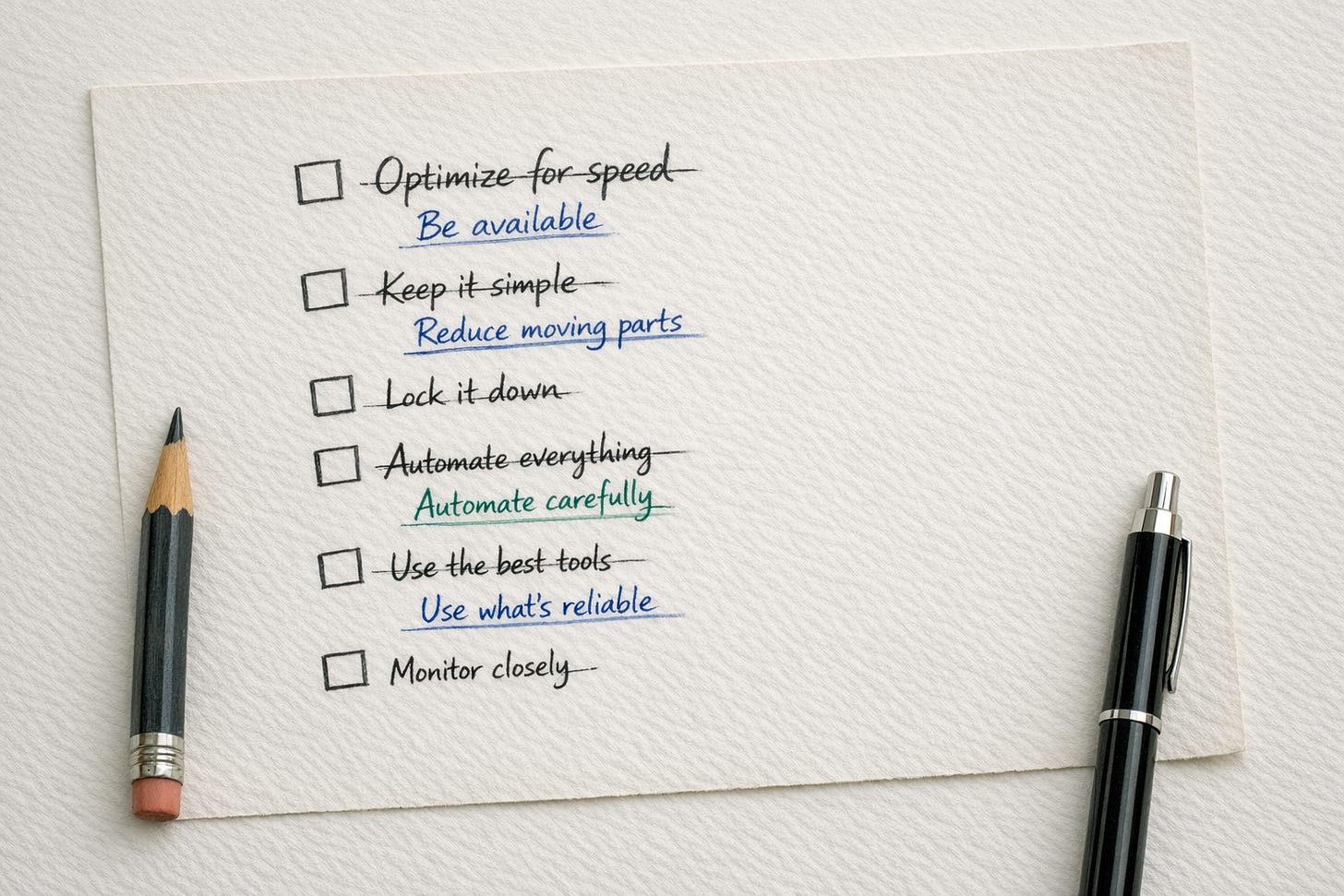

These three problems look different on the surface, but they share a common structure. Each involves a tension between an ideal state and practical constraints. Security versus availability. Flexibility versus clarity. Capability versus autonomy. In each case, the “right” answer depends on context that changes over time.

I am also noticing how my own behavior shapes the system. My complaints about cost trained OpenClaw to be frugal. My reaction to the group chat chaos led to stricter access controls. The AI is learning my preferences, but I am also learning what I actually value through the friction of using it. This is not a story about AI solving problems. It is a story about discovering where the real problems are.

The unifying thread is that optimization requires knowing what I am optimizing for. I thought I wanted security, flexibility, and capability. What I actually needed was availability, clarity, and autonomy. The gap between those two lists is where the interesting decisions live. I do not think there are universal answers here. Just different flavors of compromise, and the ongoing work of noticing which ones I have chosen.

AI Disclosure: I used my OpenClaw agent to draft this post based on my prompts and ideas. I edited it and stand behind the final version. If you are interested in how I trained OpenClaw to sound like me when writing, I made a YouTube video about it.

Interesting, thanks for sharing. I was toying with the idea of trying out OpenClaw on a Raspberry Pi, but was worried about the time I would burn through on such a project. Thanks for scratching my OpenClaw itch.