On Repeating Patterns

A small story about AI, fabric, and writing things down precisely

Unlike many of my posts about my psychotherapy journey, this post isn’t about repeating behavioral patterns. It’s literally about a repeating fabric pattern. Marsha asked me this past weekend if AI could help her design a fabric pattern that she could upload to Spoonflower. It turns out that this task wasn’t as straightforward as I thought it would be.

The requirement sounded simple enough. Spoonflower wants designs built as 8-inch by 8-inch tiles that repeat cleanly across fabric. If the tile repeats correctly, the fabric looks continuous. If it doesn’t, the cloth looks more like patchwork.
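To put numbers on that requirement, here’s the basic arithmetic as a short Python sketch. The 150 DPI export resolution is my assumption (check Spoonflower’s current upload guidelines), and a yard is taken as 36 inches of fabric length.

```python
import math

TILE_INCHES = 8    # tile edge from the post
DPI = 150          # assumed export resolution; check Spoonflower's guidelines
YARD_INCHES = 36   # one yard of fabric length

def tile_pixels(tile_inches: float, dpi: int) -> int:
    """Edge length of the square tile in pixels at the given resolution."""
    return round(tile_inches * dpi)

def repeats(length_inches: float, tile_inches: float) -> float:
    """How many tile repeats fit along a length of fabric (may be fractional)."""
    return length_inches / tile_inches

def seams(length_inches: float, tile_inches: float) -> int:
    """Tile-to-tile boundaries along that length, where mismatches would show."""
    return math.ceil(length_inches / tile_inches) - 1

print(tile_pixels(TILE_INCHES, DPI))        # 1200
print(repeats(YARD_INCHES, TILE_INCHES))    # 4.5
print(seams(YARD_INCHES, TILE_INCHES))      # 4
```

That’s four seams along the length of a single yard, plus more across the width, and every one of them is a place where a tile that doesn’t repeat cleanly will show.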

My struggle with image-based AI

At first, I assumed this was exactly the kind of thing AI would be good at. It wasn’t.

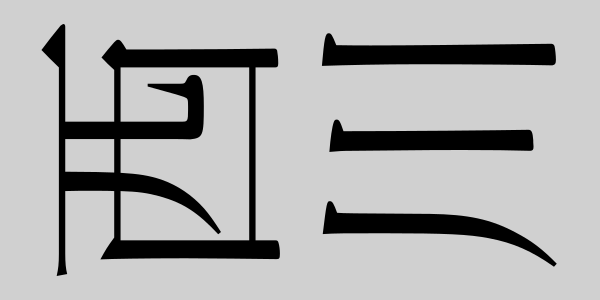

In fairness, I could get AI to generate patterns that looked somewhat nice on their own. The moment I tried repeating them, problems showed up. Seams didn’t line up. Elements drifted. Rotations flipped unpredictably. Japanese characters got subtly omitted. Marsha was the one who kept noticing small artifacts that I didn’t catch at first.

That’s when it clicked that this wasn’t just an art problem. It was also a math problem.

A repeating fabric tile isn’t just an image. It’s a constraint system. The left edge has to match the right edge. The top has to match the bottom. Rotations and spacing have to obey rules. “Almost right” isn’t actually right for an 8-inch square tiled across a whole yard of fabric.
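The edge-matching rules can be satisfied by construction rather than checked after the fact: draw every element with wraparound (modulo) coordinates, so anything that spills past the right or bottom edge re-enters on the left or top. Here’s a minimal Python sketch on a tiny grid of 0/1 cells standing in for pixels; the grid and the helper names are hypothetical, chosen only for illustration.

```python
def blank_tile(width: int, height: int) -> list[list[int]]:
    """A width x height grid of empty (0) cells."""
    return [[0] * width for _ in range(height)]

def stamp(tile, cells, x0, y0, value=1):
    """Draw a motif given as (dx, dy) offsets from (x0, y0).

    Coordinates wrap modulo the tile size, so a motif crossing the right
    edge continues on the left edge (and one crossing the bottom continues
    at the top).
    """
    height, width = len(tile), len(tile[0])
    for dx, dy in cells:
        tile[(y0 + dy) % height][(x0 + dx) % width] = value

def repeat(tile, nx, ny):
    """Lay the tile out nx times across and ny times down."""
    return [row * nx for _ in range(ny) for row in tile]

# A 5-cell horizontal bar that starts 2 cells before the right edge.
tile = blank_tile(8, 4)
stamp(tile, [(i, 0) for i in range(5)], x0=6, y0=1)
print(tile[1])                        # [1, 1, 1, 0, 0, 0, 1, 1]
print(repeat(tile, 2, 1)[1][6:11])    # [1, 1, 1, 1, 1]: the bar survives the seam
```

If the bar had simply been clipped at the right edge instead, every seam in the repeated fabric would show a 2-cell stub followed by a gap.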

I really struggled with image-based AI. When I asked AI to design a pattern, I was really asking for something that looked like a pattern it had seen before. It’s pattern-matching, not enforcing guarantees. It doesn’t actually care whether the design survives repetition. The AI cares about whether it did its best to respond to the prompt, independent of the result.

Every time I “refined” the prompt, one problem would go away and another would pop up. Characters would rotate individually instead of as a group. Pairings would break. Artifacts would creep in. Marsha would point at the screen and say, “That’s not right,” and she’d be correct.

The Herringbone example

As I was considering which repeating patterns to feed to the AI, herringbone, a pattern commonly used in tiling, seemed appropriate.

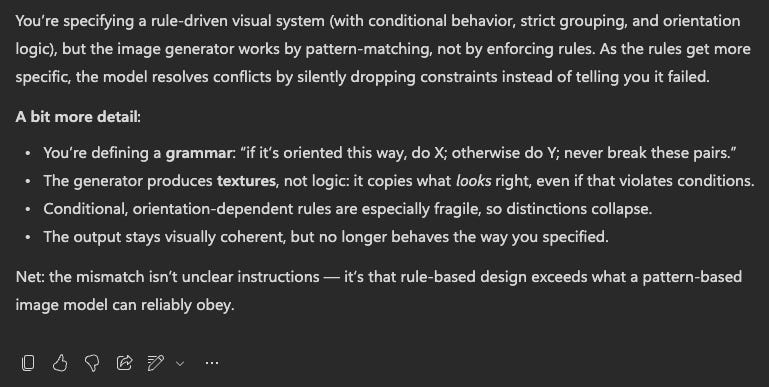

I thought using a “brick” containing vertically stacked characters for Marsha’s maiden name (“Nishikawa,” or 西川) in two different colors could work really well when laid out in this pattern.

After MANY attempts (and way more time than it would have taken me to manually lay out what I wanted in a graphics program!), I found that AI had some interesting ideas, but none that really matched what I wanted.

The images were somewhat visually interesting, if a bit random, but they didn’t “tile” well into a repeatable pattern. The funny thing is that, despite my insistence otherwise, the AI really wanted to orient all the kanji in the same direction on parallel bricks rather than in a herringbone, likely to keep the characters intact.

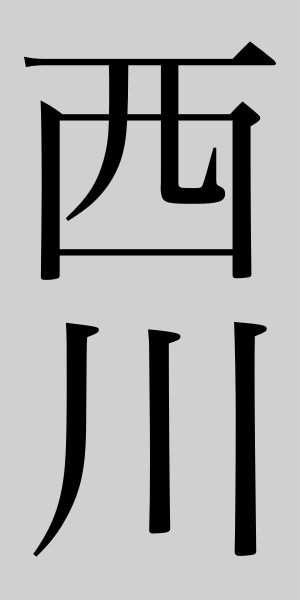

When I tried to get the AI to rotate some of the characters, it rotated some of them strangely, giving them a horizontal rather than a vertical text orientation, and dropped some kanji altogether.

Why this failed

So I asked AI why, after all this heavy prompting, it was failing. It returned multiple screens of analysis that ended with this summary:

Vibe coding to the rescue

After reading the AI’s analysis of its own failures, I tried a different approach. Instead of asking image-based AI to compose the pattern, I turned to vibe coding. That turned out to be the pivot. The answer wasn’t “try a better prompt.” It was “code the process.”

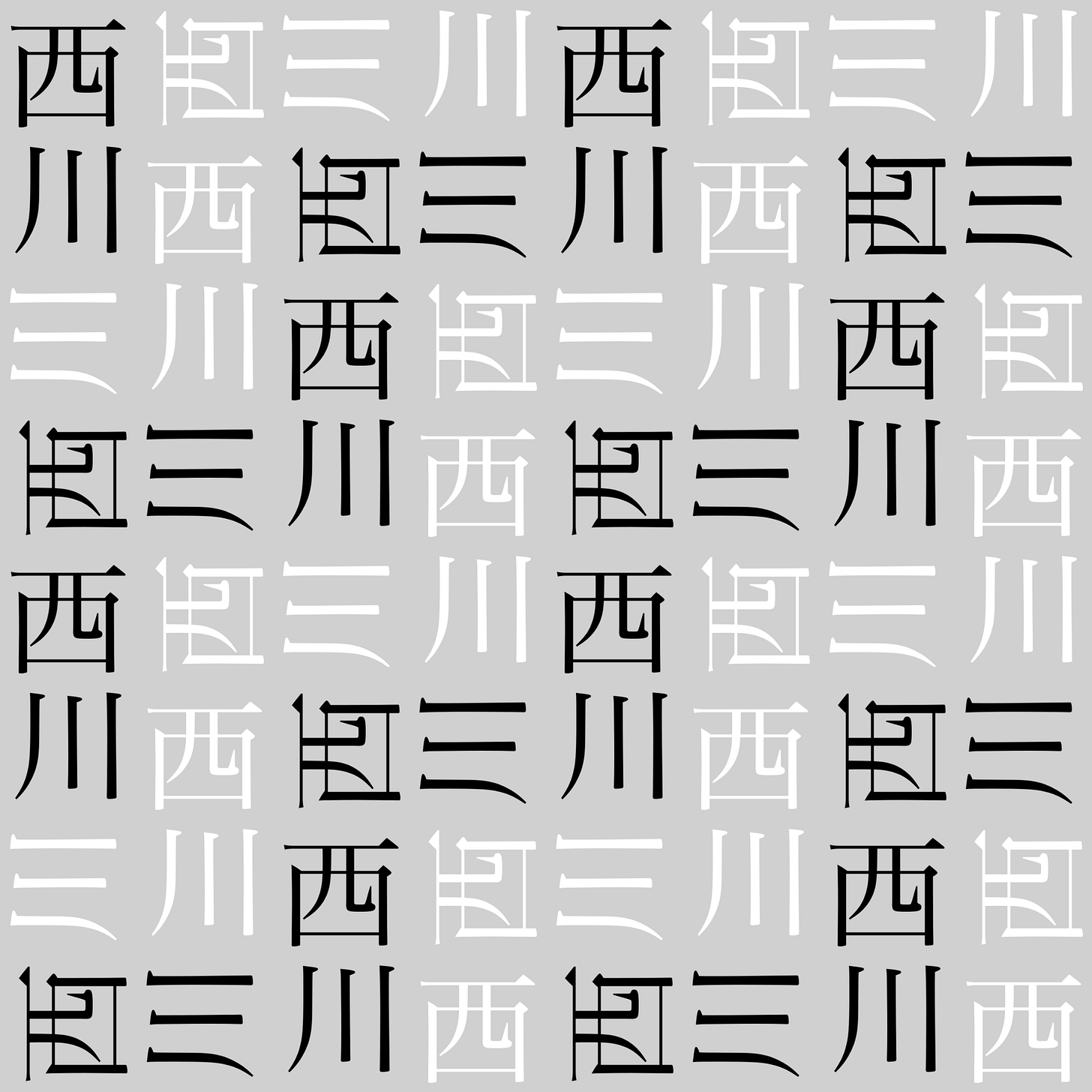

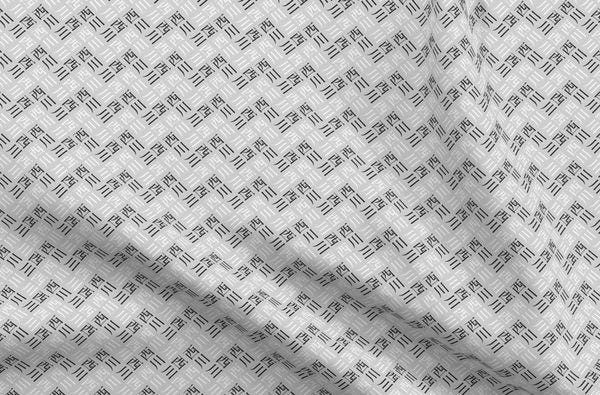

I ended up just vibe coding a Python script to define the “brick”, lay out the bricks deterministically, rotate the layout as a whole instead of individual symbols, crop the container, and export the result at a known scale.

In my case, that meant defining a paired set of Japanese characters as a single brick as well as its counterpart rotated 90 degrees.
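As a minimal Python sketch of that idea, the brick below stores the two characters as one unit, and its counterpart is produced by rotating the whole unit, so the pair can’t be split or turned independently. The `Brick` class, its fields, and the 1x2 layout units are hypothetical, for illustration only.

```python
from dataclasses import dataclass, replace

# Hypothetical brick model for illustration; not the actual script.
@dataclass(frozen=True)
class Brick:
    """One building block: a fixed pair of characters that always move together."""
    glyphs: tuple[str, str]   # drawn top-to-bottom in the upright brick
    width: int                # in layout units
    height: int
    rotation: int = 0         # degrees, applied to the whole brick

    def rotated90(self) -> "Brick":
        """The counterpart brick: same glyph pair, turned as one unit."""
        return replace(self, width=self.height, height=self.width,
                       rotation=(self.rotation + 90) % 360)

VERTICAL = Brick(glyphs=("西", "川"), width=1, height=2)
HORIZONTAL = VERTICAL.rotated90()
print(HORIZONTAL)
# Brick(glyphs=('西', '川'), width=2, height=1, rotation=90)
```

Because rotation belongs to the whole brick, one character can’t end up turned sideways while its partner stays upright, which was one of the failures I kept seeing from the image model.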

Those two bricks were the only building blocks. As my program laid the bricks down both vertically and horizontally to form a repeating tile, it alternated the font fill color. It also limited the view window of the graphic so that the portions of bricks outside the tile were hidden.
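One way to sketch that layout in Python, assuming 2x1 bricks on a unit grid: each vertical brick is followed by a horizontal one, one step along the diagonal, forming a “staircase” herringbone that repeats every 4 units across and down. Bricks that extend past the window are clipped, playing the role of the view window described above. The coordinate scheme, the 4-unit period, and using the brick orientation (V or H) as a stand-in for the alternating fill color are my assumptions, not a transcript of the actual script.

```python
PERIOD = 4  # assumed: the staircase repeats every 4 grid units across and down

def herringbone_bricks(size, period=PERIOD):
    """Yield (orientation, x, y, w, h) for bricks in and around a size x size window.

    Along each diagonal "staircase", a vertical 1x2 brick at (x, k) is followed
    by a horizontal 2x1 brick just to its right; parallel staircases are
    offset by `period` units. Some yielded bricks fall entirely outside the window.
    """
    reach = size // period + 2
    for k in range(-2, size + 1):              # step along the diagonal
        for m in range(-reach, reach + 1):     # which parallel staircase
            x = k + period * m
            yield ("V", x, k, 1, 2)            # covers [x, x+1) x [k, k+2)
            yield ("H", x + 1, k, 2, 1)        # covers [x+1, x+3) x [k, k+1)

def rasterize(size, period=PERIOD):
    """Paint brick orientations into a size x size grid, clipping at the edges."""
    grid = [[None] * size for _ in range(size)]
    for orient, x, y, w, h in herringbone_bricks(size, period):
        # Clip the brick's footprint to the window (the "view window").
        for cy in range(max(y, 0), min(y + h, size)):
            for cx in range(max(x, 0), min(x + w, size)):
                if grid[cy][cx] is not None:
                    raise ValueError("bricks overlap")
                grid[cy][cx] = orient
    return grid

tile = rasterize(PERIOD)
for row in tile:
    print(" ".join(row))
# V H H V
# V V H H
# H V V H
# H H V V

# Sanity check: a larger window is just the 4x4 tile repeated 2x2.
big = rasterize(2 * PERIOD)
print(big == [row * 2 for _ in range(2) for row in tile])   # True
```

A brick clipped at the bottom edge of the window reappears as the matching piece clipped at the top, so the halves join into whole bricks across every seam.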

To complete the herringbone pattern, my program then made a 2x2 matrix of this repeating tile, rotated it by 45 degrees, and cropped a fixed view window that I knew would allow the pattern to repeat cleanly even after rotation. (Admittedly, there was a little bit of math here.)
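The “little bit of math” can be sketched in Python too. If the unrotated tile repeats every P units, then after a 45-degree rotation the pattern repeats horizontally and vertically every P√2 units, the diagonal of a P by P square. A 2x2 block of tiles is 2P on a side, and the largest axis-aligned square that fits inside it once it’s rotated 45 degrees has side 2P/√2 = P√2, which is one way to see why a 2x2 block is just enough. The 1200-pixel period and the export size below are example values (the export size assumes 150 DPI), not numbers from my script.

```python
import math

def rotate(x, y, degrees):
    """Rotate the point (x, y) counterclockwise about the origin (y axis up)."""
    t = math.radians(degrees)
    return (x * math.cos(t) - y * math.sin(t),
            x * math.sin(t) + y * math.cos(t))

def rotated_window_side(period):
    """Side of an axis-aligned square that repeats after a 45-degree rotation."""
    return period * math.sqrt(2)

P = 1200.0                      # example: unrotated tile edge in pixels
S = rotated_window_side(P)      # about 1697.06

# (S, 0) and (0, S) must be translations of the rotated tiling: undo the
# rotation and check each lands on whole-number multiples of P.
ux, uy = rotate(S, 0, -45)
print(round(ux / P, 9), round(uy / P, 9))   # 1.0 -1.0
vx, vy = rotate(0, S, -45)
print(round(vx / P, 9), round(vy / P, 9))   # 1.0 1.0

# The window's corners sit exactly P from the center, which is the apothem
# of a 2P x 2P block rotated 45 degrees, so the crop just fits.
print(math.isclose(math.hypot(S / 2, S / 2), P))   # True

# Resample the cropped window to the target size at export.
target_px = 1200                # e.g. 8 in at an assumed 150 DPI
print(round(target_px / S, 4))  # scale factor: 0.7071
```

The window side P√2 isn’t a whole number of pixels, which is one reason to resample the crop to a fixed export size at the end rather than trying to force the rotated layout onto an integer grid.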

At no point was I asking the AI what herringbone should look like. I was telling it how to form the herringbone. That turned out to be a better way to interact with the AI. The randomness that made image generation frustrating mostly disappeared once I specified the code to generate the image, rather than asking the image-based AI itself to iterate. Moreover, having this implemented in code allowed me to easily tweak the image using different parameters.

When AI works best

This isn’t really about fabric design. It’s more general than that. I’ve written before about large language models being non-deterministic. I’ve used vibe coding in other contexts too, like presenting Doodle-like scheduling workflows instead of letting an AI negotiate time slots with people directly.

What this experiment reinforced for me is that AI is often better at writing the code that produces the outcome than at effecting the outcome itself. When correctness and repeatability matter, I want the AI boxed in by rules.

Vibe coding isn’t about giving up control to AI. It’s about shifting creativity to the right layer. I let the AI write the code for a defined process, rather than relying on its probabilistic behavior for image creation.

The proof, in this case, is literally on the cloth. The fabric repeats cleanly, so it’s ready for a future sewing project for Marsha. And I’m left with a better hands-on understanding of when AI works best.

Simulated preview of the pattern on cloth, generated by Spoonflower.

When I need good aesthetics only, I’ll still use image-based AI. When I need something more constrained, I’ll just use AI to code the rules.

AI disclosure: I used AI tools during the drafting and editing process to help clarify structure and language. All ideas, judgments, and final wording are my own.

Have you tried fixing an old photo and found some really strange oddities? One arm came out gray while the other was colorized flesh-toned. Faces look sort of close, but some features are out of proportion, like my nose: it’s a Honda nose, not something else!? A bit more droop in the eyes, and it sort of looks like me, but it’s definitely not what you’d expect from a photo that only needed noise removal, focus, and sharpening. And try running the “model” again and it comes up with something different each time!? I could do better with an FFT filter to get the edges and reduce noise. It turned the shadow under my neck into a beard!? And what it did to the Weyerhaeuser logo on my jacket! Ugh! A weird cross in a triangle, then some sort of fish hook in a triangle (at least it got the triangle right, but it wasn’t our tree-in-the-triangle logo). I even loaded a copy of the logo and told it to use that, just following the fold of the material for perspective... nope. Is there any photo-fix AI that doesn’t try to make a better ME? Lol!